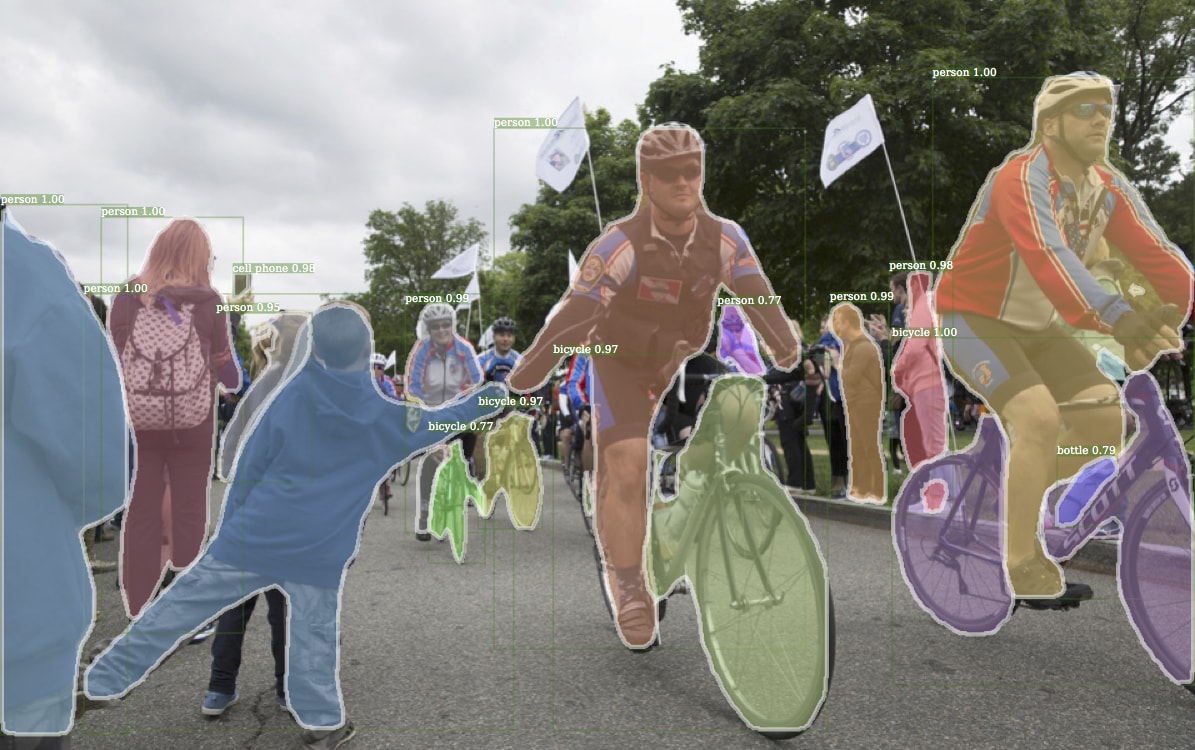

A few weeks ago, Facebook open-sourced its platform for object detection research, which they are calling Detectron. Object detection, wherein a machine learning algorithm detects the coordinates of objects in images, remains an ongoing challenge. To find algorithms that provide both sufficient speed and high accuracy is far from a solved problem. Detectron’s ostensible purpose is to move a bit closer to that goal using community-led contributions. Google did something similar last year when they released the Tensorflow Object Detection API, which Filestack utilizes for its object detection models, so we thought we’d take a look at Detectron and see how it compares.

Detectron Facts

While Detectron could, in theory, be used out-of-the-box to detect general objects (the baseline for most detection models is a dataset called Common Objects in Context (COCO). Detectron does not appear to be designed for that. And if that’s truly all you need, there are plenty of other, easier solutions you could get running. What industry people, as opposed to researchers, typically want is to train and run inference on their own datasets. If this is you, prepare for a long trek to achieve this goal using Detectron.

Indeed, you’ll find few, if any, scripts or tutorials in the documentation explaining how to train on your datasets, how to modify the code to suit your needs, or even how to preprocess your data. When the README emphasizes “for research“, you can be sure they are serious. Facebook has given little effort to make this resemble a “production” library, which is of course entirely by design. It’s in Facebook’s best interest to encourage open-source contributions to improve the state-of-the-art without necessarily making it simple for others to use their platform out-of-the-box.

Training Your Own Dataset

If you want to use your own data and classes to train custom models on any number of the many architectures Facebook provides, you’ll need to prepare to get your hands dirty. All training annotations must be in the COCO format, and you will be mostly on your own converting them. You then must hard code your database into the “database catalog”, build a configuration file that works for your setup (and Detectron currently only runs on the GPU), train your model, and then modify all of the inference code in order to load your dataset into the evaluation scripts and integrate with your product. This proved to be so time consuming I effectively had to stop, realizing this is simply not what the library is designed for. You may have better success, but the focus is clearly on improving COCO baselines, not aiding the development of custom models.

Comparisons to Tensorflow Object Detection API

On the contrary, while anyone who works with me knows my experience with the Tensorflow Object Detection API has been less than smooth, it does provide a number of helpful tools, an abundance of documentation, and even Google-hosted tutorials on how to train with your own datasets on the Google ML engine. I won’t say it’s “simple” to get started with, because it’s not and you should be prepared to make lots of code modifications to get it running (and possibly breaking changes whenever Tensorflow is updated), but there’s a much clearer path to success than with Detectron. It is, in large part, designed to be used in production.

That being said, Detectron is smaller, contains a Docker file that can serve as a base for training and inference (which is great, since Python dependencies get hairy very quickly), and is pretty easy to parse. If you are interested in delving into the cutting-edge of object detection, including Retinanet, which is an exciting new architecture, Detectron will provide plenty of tools to play with. But it’s not for the faint of heart, and not a panacea for those looking to train detection models for production.

It’s important to remember that automated machine learning training and infrastructure has not had the same time to blossom as other tools in IT. Amazon now has Sagemaker, Google is hinting at AutoML, and every month new tools are released that bridge the gap between research and implementation. It’s an exciting time to be working with machine learning, although it also sometimes requires an abundance of patience.

Filestack is a dynamic team dedicated to revolutionizing file uploads and management for web and mobile applications. Our user-friendly API seamlessly integrates with major cloud services, offering developers a reliable and efficient file handling experience.

Read More →