Deep learning has gone from sci-fi concept to industry buzzword in a matter of years. What started as proofs of concept and enhanced facial recognition software quickly grew into state-of-the-art image and speech recognition, video analysis, behavioral prediction and countless other successes, wherever the goal was specific enough and the dataset large enough to benefit from deep models.

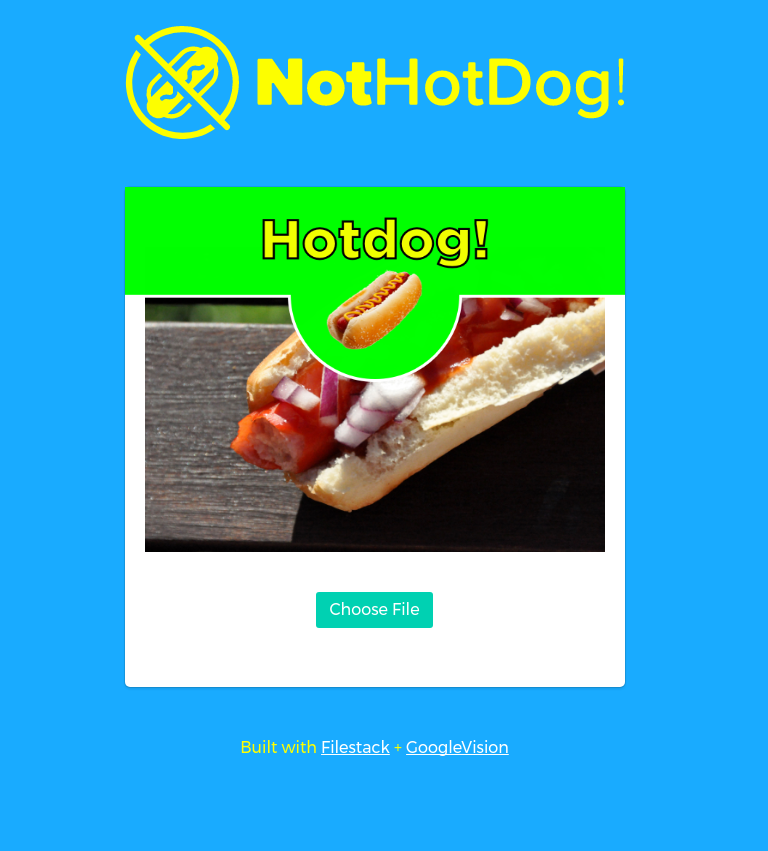

The Inspiration: Silicon Valley’s Not Hotdog App

With modern approaches such as feed-forward convolutional networks, recurrent neural networks and deep reinforcement learning, the possibilities seem endless. Scanning for cancer and neurological diseases. Using live video footage to predict crimes before they happen. Robots and cars that can analyze their environment and learn without supervision.

Or finding out whether or not something is a hotdog.

That’s the joke in season 4 of Silicon Valley, where a developer tasked with building an app that can identify any food ends up with one that can only tell whether something is a hotdog or not.

How the Real Not Hotdog App Was Built

That isn’t all, though. It turns out the Silicon Valley team actually built the app using real machine learning. They used transfer learning (taking a model that has already been trained and “transferring” what it learned to a new domain, which requires less training time than starting from scratch) on the SqueezeNet architecture, together with mobile TensorFlow and React Native, to create a fast, simple app that, yes, identifies whether an object is or is not a hotdog.

The developer detailed the process in a long article after the app’s public release. It is an impressive creation by one developer with a GPU-powered machine, but it still required a fair amount of machine learning knowledge and experience, including familiarity with various frameworks, image processing and statistical analysis. It also took time.

Filestack’s Take: Creating the Not Hotdog App

At Filestack, we decided to replicate this with our own Not Hotdog app using our tagging service. It took less than an hour to write the initial JavaScript, which uses both our File Picker and our image tagging API. In fact, building the UI for the website took longer than coding the actual logic.

Creating the Application

If you haven’t already, go snag an API key. It’s a free and easy signup.

The first step is to create a basic HTML file and add the File Picker script inside your head tag:

```html
<script src="https://static.filestackapi.com/v3/filestack.js"></script>
```

You can create the actual input field and image container any way you wish. I used Bulma, which is a flexbox-based CSS framework, and some very minor custom CSS to align things properly.

The JavaScript logic is simple and straightforward. We create a button that, when pressed, opens the Picker, which is configured to accept only images and only from certain sources. When a user completes an upload, Filestack returns a file handle. We first send that handle to our SFW service (to keep this all family-friendly), then use it to call our image tagging service with a jQuery AJAX request.
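The flow just described (pick, then SFW check, then tagging) can be sketched as below. This is a minimal illustration under stated assumptions, not the code from our demo repository: it assumes `client` is the object returned by `filestack.init(API_KEY)` and that `client.pick(options)` resolves to an object with a `filesUploaded` array, while `isSafe`, `getTags` and `matchesHotdog` are hypothetical helpers standing in for the jQuery AJAX calls and the tag check.

```javascript
// Minimal sketch of the upload flow: pick an image, check SFW, then tag it.
// Assumptions (not taken from the demo repository):
// - `client` is what filestack.init(API_KEY) returns, and client.pick(options)
//   resolves to { filesUploaded: [...], filesFailed: [...] }.
// - `isSafe(handle)` is a hypothetical helper resolving to true/false (SFW service).
// - `getTags(handle)` is a hypothetical helper resolving to an object that maps
//   tag names to confidence scores (tagging service).
// - `matchesHotdog(tags)` decides whether those tags describe a hotdog.

// Restrict the Picker to images from a few sources (this source list is only an example).
const PICKER_OPTIONS = {
  accept: "image/*",
  fromSources: ["local_file_system", "url", "imagesearch"],
  maxFiles: 1,
};

function runHotdogCheck(client, isSafe, getTags, matchesHotdog) {
  return client.pick(PICKER_OPTIONS).then((result) => {
    const file = (result.filesUploaded || [])[0];
    if (!file) {
      // Nothing was uploaded (Picker closed or upload failed).
      return { status: "no-upload" };
    }
    const handle = file.handle;
    // Call the SFW service first, so unsafe images are never sent for tagging.
    return isSafe(handle).then((safe) => {
      if (!safe) {
        return { status: "nsfw", handle };
      }
      return getTags(handle).then((tags) => ({
        status: matchesHotdog(tags) ? "hotdog" : "not-hotdog",
        handle,
      }));
    });
  });
}
```

In the browser, `runHotdogCheck` would be called from the button’s click handler. Passing the helpers in as arguments is a design choice that keeps the flow testable with stand-in functions and no network calls.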

We then iterate through the returned tag keys. If any tag contains both “hot” and “dog” as substrings (a tag can come in many variations, which is why we search by keyword), then we have a hotdog. Awesome!
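One way to write that keyword check is sketched below. It assumes the tags arrive as a plain object mapping tag names to confidence scores, and it reads the check as “a single tag contains both keywords”; the function name `isHotdog` is illustrative.

```javascript
// Minimal sketch of the keyword match on tag names.
// Assumption: `tags` is a plain object mapping tag names to confidence scores,
// e.g. { "hot dog": 97, "food": 90 }.
function isHotdog(tags) {
  return Object.keys(tags || {}).some((tag) => {
    const name = tag.toLowerCase(); // case-insensitive match
    // Require both keywords inside the same tag name.
    return name.includes("hot") && name.includes("dog");
  });
}
```

Requiring both keywords in the same tag matters: a picture of a dog tagged with separate “dog” and “photo” tags would otherwise count as a hotdog, because “photo” contains “hot”.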

There are more ways to extend this functionality. For instance, all we check is whether a tag containing “hot” and “dog” exists, but each tag also comes with a confidence score. We could extend the application with a threshold, so that only a minimum level of hotdog confidence triggers the positive response. This is just the tip of the iceberg in how image tagging can power new services and enhance existing ones.
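A threshold could look like the sketch below. It assumes confidence scores are numbers on a 0 to 100 scale, and the default cutoff of 70 is an arbitrary example value, not a Filestack recommendation; `isConfidentHotdog` is a hypothetical name.

```javascript
// Minimal sketch of a confidence threshold on the hotdog check.
// Assumptions: `tags` maps tag names to numeric confidence scores (0-100),
// and 70 is an arbitrary example cutoff.
function isConfidentHotdog(tags, minConfidence = 70) {
  return Object.entries(tags || {}).some(([tag, confidence]) => {
    const name = tag.toLowerCase();
    // Keyword match on the tag name, plus a minimum confidence score.
    return name.includes("hot") && name.includes("dog") && confidence >= minConfidence;
  });
}
```

For example, `isConfidentHotdog({ "hot dog": 40 })` returns `false` with the default cutoff, while `isConfidentHotdog({ "hot dog": 40 }, 30)` returns `true`. Making the cutoff a parameter lets you tune it against real uploads instead of hard-coding it.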

Have fun testing out the application and head over to our GitHub demo repository to see how we did it. Happy tagging!

Filestack is a dynamic team dedicated to revolutionizing file uploads and management for web and mobile applications. Our user-friendly API seamlessly integrates with major cloud services, offering developers a reliable and efficient file handling experience.
